ELK Log Collection | PIGCLOUD

pigcloud

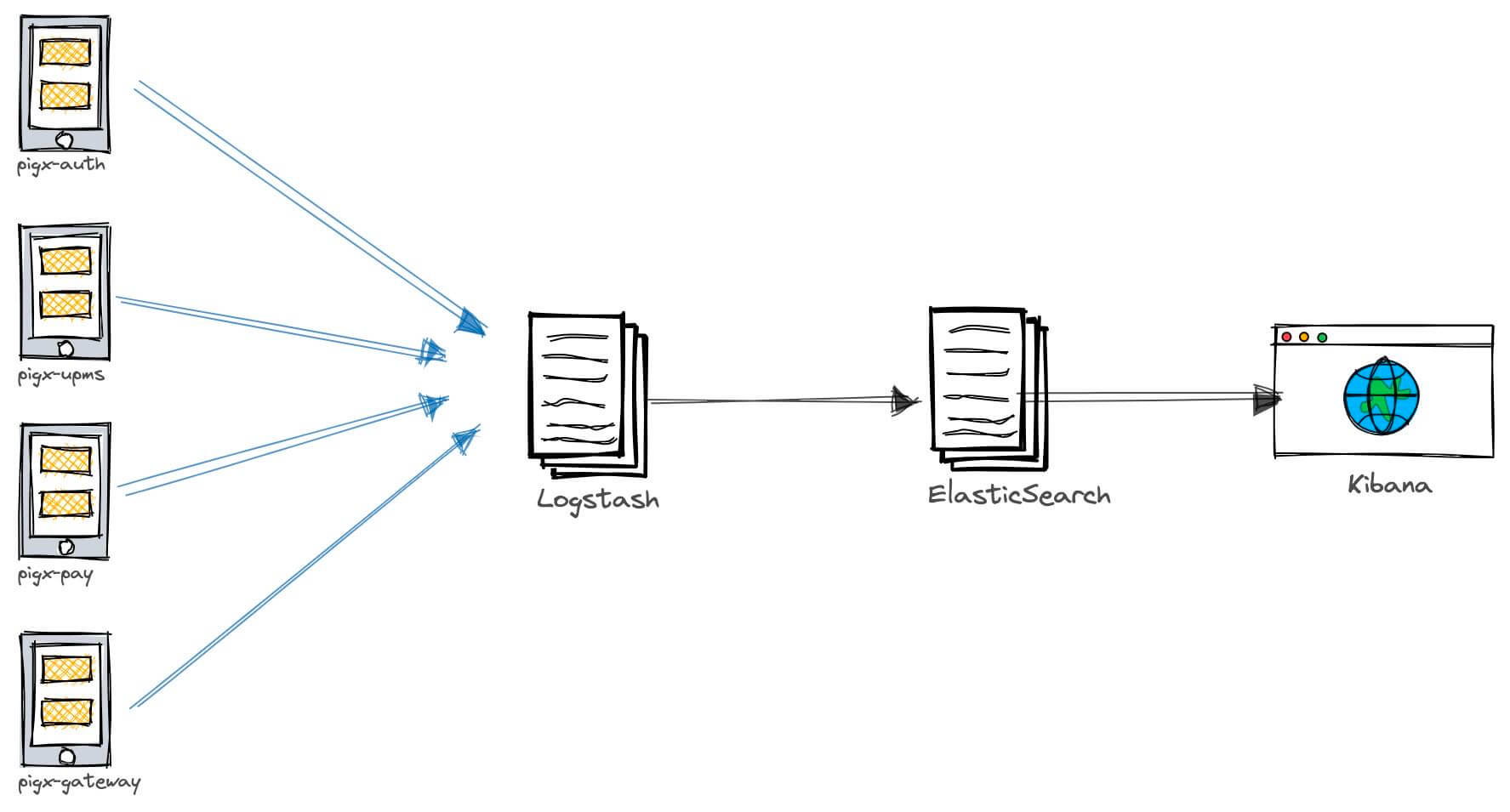

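ELK stands for Elasticsearch, Logstash, and Kibana. Combined, they form an online logging system. In a distributed microservice system, ELK makes it easy to query logs and compute statistics over them.

This article uses the upms module of pigx as the example.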

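# What each ELK service does

- Elasticsearch: stores the collected log data;

- Logstash: collects logs. Once an application is integrated with Logstash, it sends its logs to Logstash, which forwards them to Elasticsearch;

- Kibana: provides a web-based visual interface for viewing the logs.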

# Setting up the ELK environment with Docker Compose

# Write a docker-compose.yml to start the ELK services

```yaml
version: '3'
services:
  elasticsearch:
    image: elasticsearch:6.4.0
    container_name: elasticsearch
    environment:
      - "cluster.name=elasticsearch"
      - "discovery.type=single-node"
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    volumes:
      # plugin and data directories on the host
      - /mydata/elasticsearch/plugins:/usr/share/elasticsearch/plugins
      - /mydata/elasticsearch/data:/usr/share/elasticsearch/data
    ports:
      - 9200:9200
  kibana:
    image: kibana:6.4.0
    container_name: kibana
    links:
      # Elasticsearch is reachable under the alias "es"
      - elasticsearch:es
    depends_on:
      - elasticsearch
    environment:
      - "elasticsearch.hosts=http://es:9200"
    ports:
      - 5601:5601
  logstash:
    image: logstash:6.4.0
    container_name: logstash
    volumes:
      # pipeline configuration, created in a later step
      - /mydata/logstash/upms-logstash.conf:/usr/share/logstash/pipeline/logstash.conf
    depends_on:
      - elasticsearch
    links:
      - elasticsearch:es
    ports:
      # one TCP port per application module
      - 4560-4600:4560-4600
```

# Create the host directories mounted by the containers

```bash
mkdir -p /mydata/logstash
mkdir -p /mydata/elasticsearch/data
mkdir -p /mydata/elasticsearch/plugins
# the Elasticsearch container must be able to write to its data directory
chmod 777 /mydata/elasticsearch/data
```

# Write the Logstash log collection pipeline

Create upms-logstash.conf in the /mydata/logstash directory:

```conf
input {
  # listen for newline-delimited JSON events sent by the application
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 4560
    codec => json_lines
  }
}
output {
  # write events to Elasticsearch, one index per day
  elasticsearch {
    hosts => "es:9200"
    index => "upms-logstash-%{+YYYY.MM.dd}"
  }
}
```

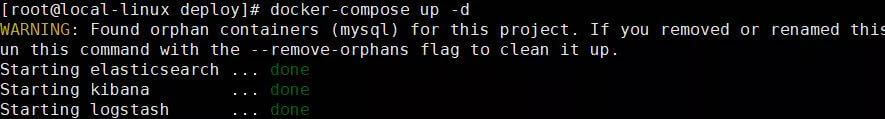
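The index setting above produces one index per day. As a rough sketch of what names to expect when querying Elasticsearch later, the helper below builds the same name in the shell. `daily_index` is a hypothetical helper, not part of Logstash, and it assumes the date part is taken from the event timestamp in UTC.

```bash
#!/usr/bin/env bash
# daily_index SERVICE [DAY]
# Hypothetical helper: prints the index name that the pattern
# "<service>-logstash-%{+YYYY.MM.dd}" would yield for a given day.
# DAY defaults to today's date in UTC (assumption: Logstash formats
# the event timestamp in UTC).
daily_index() {
  local service=$1
  local day=${2:-$(date -u +%Y.%m.%d)}
  printf '%s-logstash-%s\n' "$service" "$day"
}
```

For example, `daily_index upms 2024.01.31` prints `upms-logstash-2024.01.31`.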

# Start the ELK services

Run docker-compose up -d in the same directory as docker-compose.yml.

Note: Elasticsearch can take several minutes to start, so be patient.
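Rather than guessing when Elasticsearch is ready, one option is to poll its HTTP port until it answers. The following is a minimal sketch, assuming curl is installed on the host; `wait_for_http` is a hypothetical helper name, and the retry count and delay are arbitrary defaults.

```bash
#!/usr/bin/env bash
# wait_for_http URL [ATTEMPTS] [DELAY_SECONDS]
# Hypothetical helper: polls URL until it returns a successful HTTP
# response. Returns 0 on success, 1 if every attempt failed.
wait_for_http() {
  local url=$1
  local attempts=${2:-60}
  local delay=${3:-5}
  local i
  for ((i = 1; i <= attempts; i++)); do
    # -f: treat HTTP errors as failures, -s: silent, -S: still show errors
    if curl -fsS -o /dev/null --max-time 5 "$url"; then
      return 0
    fi
    # do not sleep after the final attempt
    if ((i < attempts)); then
      sleep "$delay"
    fi
  done
  return 1
}
```

On the Docker host this could be called as `wait_for_http http://localhost:9200` right after docker-compose up -d, using the 9200 port mapping from docker-compose.yml.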

# Install the json_lines codec plugin in Logstash

```bash
# open a shell inside the logstash container
docker exec -it logstash /bin/bash
cd /bin/
logstash-plugin install logstash-codec-json_lines
exit
# restart the container so the plugin is loaded
docker restart logstash
```

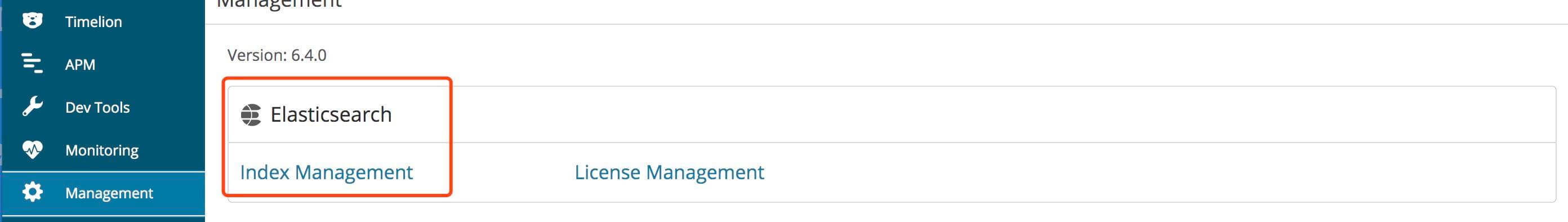
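Before wiring in an application, the TCP input can be exercised by hand with a single JSON line. The sketch below assumes nc (netcat) is available on the host. Both function names are hypothetical, and the field names only approximate what logstash-logback-encoder emits.

```bash
#!/usr/bin/env bash
# build_test_event [MESSAGE]
# Hypothetical helper: prints one newline-terminated JSON event, the
# format the json_lines codec expects. MESSAGE must not contain double
# quotes or backslashes, since no JSON escaping is done here.
build_test_event() {
  local message=${1:-"hello from shell"}
  printf '{"@timestamp":"%s","level":"INFO","logger_name":"manual-test","message":"%s"}\n' \
    "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$message"
}

# send_test_event HOST PORT [MESSAGE]
# Sends the event to a Logstash TCP input; gives up after 2 seconds.
send_test_event() {
  local host=$1
  local port=$2
  build_test_event "$3" | nc -w 2 "$host" "$port"
}
```

With the containers running, `send_test_event localhost 4560 "ping"` should produce a document in the current upms-logstash index, which can then be looked up in Kibana.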

# Open Kibana on port 5601 of the host

# Integrating pigx services with Logstash (UPMS module as the example)

# Add the pom dependency

```xml
<!-- Logstash integration -->
<dependency>
    <groupId>net.logstash.logback</groupId>
    <artifactId>logstash-logback-encoder</artifactId>
    <version>5.3</version>
</dependency>
```

# Add an appender to logback-spring.xml

```xml
<!-- Appender that ships logs to Logstash -->
<appender name="LOGSTASH" class="net.logstash.logback.appender.LogstashTcpSocketAppender">
    <!-- Reachable host:port of the Logstash log collection input -->
    <destination>192.168.0.31:4560</destination>
    <encoder charset="UTF-8" class="net.logstash.logback.encoder.LogstashEncoder"/>
</appender>

<root level="INFO">
    <appender-ref ref="LOGSTASH"/>
</root>
```

# Start pigx and query the logs in Kibana

# Collecting logs from several modules at once

Each application sends its logs to a different TCP port. Make sure the port mapping of the logstash container covers every port used.

```conf
input {
  # upms module
  tcp {
    add_field => {"service" => "upms"}
    mode => "server"
    host => "0.0.0.0"
    port => 4560
    codec => json_lines
  }
  # auth module
  tcp {
    add_field => {"service" => "auth"}
    mode => "server"
    host => "0.0.0.0"
    port => 4561
    codec => json_lines
  }
}
output {
  # route each service to its own daily index
  if [service] == "upms" {
    elasticsearch {
      hosts => "es:9200"
      index => "upms-logstash-%{+YYYY.MM.dd}"
    }
  }
  if [service] == "auth" {
    elasticsearch {
      hosts => "es:9200"
      index => "auth-logstash-%{+YYYY.MM.dd}"
    }
  }
}
```
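As more modules are added, the pipeline file grows by one tcp input and one conditional output per module. One way to avoid copy-and-paste mistakes is to generate it. The sketch below is only an illustration: `render_pipeline` is a hypothetical helper that takes service:port pairs and prints a pipeline in the same shape as the one above.

```bash
#!/usr/bin/env bash
# render_pipeline SERVICE:PORT [SERVICE:PORT ...]
# Hypothetical helper: prints a Logstash pipeline with one tcp input
# and one Elasticsearch output per service.
render_pipeline() {
  local pair service port

  echo "input {"
  for pair in "$@"; do
    service=${pair%%:*}   # text before the first colon
    port=${pair##*:}      # text after the last colon
    cat <<EOF
  tcp {
    add_field => {"service" => "${service}"}
    mode => "server"
    host => "0.0.0.0"
    port => ${port}
    codec => json_lines
  }
EOF
  done
  echo "}"

  echo "output {"
  for pair in "$@"; do
    service=${pair%%:*}
    cat <<EOF
  if [service] == "${service}" {
    elasticsearch {
      hosts => "es:9200"
      index => "${service}-logstash-%{+YYYY.MM.dd}"
    }
  }
EOF
  done
  echo "}"
}
```

Calling `render_pipeline upms:4560 auth:4561` prints a two-module configuration. The output could be redirected to /mydata/logstash/upms-logstash.conf, followed by docker restart logstash. Ports must stay within the 4560-4600 range mapped in docker-compose.yml.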
